According to a Google Survey if a web page takes longer than 3 seconds to load, 53% of web users will abandon it. Slow loading websites can usually be traced back to too large of files, or APIs that take too long to response.

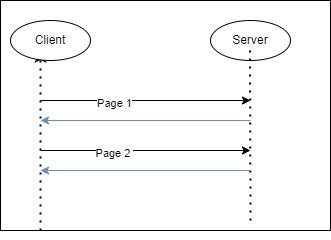

Often when you call an API there’s a lot of results to return which results in a lot of time being spent between the app server and the database server. Pagination allows us to reduce the number of results we return in a response to make it faster and easier to handle. For a website to load quickly, it’s API must be quick to respond and include everything it needs in a request.

What is Pagination?

When large lists of records are exposed through an API, we need a mechanism to control how many records are returned.

Paging is very common when dealing with collection/list endpoints. A collection or list endpoint should be any endpoint that is a plural noun like “books” or “activities”.

When the list of resources is small, fixed or well defined, or just not a lot of data it’s possible that the listing endpoint will not be paginated.

Under the hood, paging works like this:

Always remember that exposing endpoints is easy, deprecating or deleting them is difficult and in some cases impossible.

Most styles of APIs including SOAP, REST and GraphQL have some sort of paging technique.

Different Types of Pagination

There are a few different techniques that can be used to provide listing endpoints and how to paginate them. The most common techniques are Page-Based pagination (offset-based pagination), KeySet-Based pagination (index based), and Cursor based.

Like everything in software engineering, we’re managing tradeoffs based on the one we choose. Trading a problem for another problem as one of my university professors said.

Page – Based Pagination (Offset-based pagination)

This is the most common form of paging, it’s very easily implemented using SQL based databases. Essentially, the list of records is split into divisions that are called pages.

The endpoint accepts a page parameter that asks for the next page and a limit parameter (optional). When we look at the SQL query that’s executed it’s using a LIMIT and an Offset.

- The limit is the length of the page (how many records to return)

- The Offset is the number of records already returned.

Essentially if I were to do math, I would have a formula that’s (page size * page)

My query would look something like this:

SELECT *

FROM books

ORDER BY Id

LIMIT 20

OFFSET 100The database would then work by fetching N results and bringing them in ordered by the Id to return the records after 100.

| Pros | Cons |

| Jumping to a particular page is easy. If needed to get to page 50, no need to query the 49 pages before. | Database performance for OFFSET in SQL is not good. The database scans the entire table and counts N rows. |

| Stateless on the server-side. (REST API Constraints) | It’s possible that data can be repeated or be returned after it’s been deleted. |

| Simple to understand and debug | |

| Most libraries already support it; little need for custom code. | |

| Parallel requests with different pages is possible |

When I am brought in on a project to resolve why an API is slow, one of the first things I look after after database joins is how paging is implemented. The larger the offset gets the slower the requests tend to be.

KeySet-Based pagination (index based)

KeySet-based pagination uses a key parameter that should be used as the same key for the sort order. Often it’s the unique identifier (Id), and then a key parameter of since_id or since_created, etc.

The first request can’t contain the delimiter parameter because we don’t yet have it. Subsequent requests should then include the since_id of the last element.

For example, if my first API call returned 20 records and the final record had an Id of 25 I would then request /books?limit=20&since_id=25

The SQL query that would then be executed would look like this:

SELECT *

FROM books

WHERE ID > 25

ORDER BY ID

LIMIT 20This SQL query is way more efficient than using the offset because it doesn’t need to do a complete table scan. If the Id field is indexed it should be able to do most of the operation in memory.

| Pros | Cons |

| Database query is way more efficient because it uses a where clause and database indexes. | Sort order has to be the same as the key |

| Jumping to a specific page isn’t possible / parallel requests aren’t possible | |

| Items will be missing if added previous pages | |

| Multiple key parameters need to be potentially exposed |

Cursor-based Pagination

A cursor is a piece of data that points to the next element (usually forwards or backwards). The server returns a cursor pointing to the next page in each request. Sometimes, the server also provides a reverse pointer too so you can go back to the previous page.

Sometimes, the cursor is a blackbox so that clients can’t easily manipulate it. Usually it’s something like a serialized or encrypted Identifier.

The SQL query is dependent on the implementation, but often it’s similar or the same as KeySet-based Pagination.

| Pros | Cons |

| Implementation can change without introducing a new API version | Skipping pages isn’t possible. |

| Faster than using pages because no need for offset. | Implementation is complex. More than Limit/offset. |

| Debugging can be difficult. Have to unencode requests to see what’s going on. | |

| Items can be missing OR the pointer can be removed. |

Wrapping It Up

Pagination is hard – it introduces new problems each time it is implemented. Shopify originally used Paging and has deprecated it in favour of the other two methods.

Although paging is easily implemented with an offset and limit, there are potential database problems looming in the future. As you collect more and more data, it becomes more difficult to optimize it.

Also published on Medium.